We’ve all seen it. That little spinning circle that pops up at the worst possible moment. It’s called buffering, and it’s the universal sign that your video stream has ground to a halt.

But what’s actually happening behind the scenes? Simply put, a streaming video buffer is a small, reserved chunk of your device’s memory. It works by downloading a few seconds of video ahead of the part you’re currently watching.

Think of it as a safety net. This pre-loaded content ensures your video keeps playing smoothly, even if your internet connection hiccups for a moment. When that safety net runs out before the next piece of video arrives, you get the dreaded spinning wheel.

Decoding the Dreaded Spinning Wheel

You know the feeling. You’re completely absorbed in a movie’s climax, the tension is unbearable, and—bam. The video freezes. That spinning icon completely shatters the experience. This interruption is technically called rebuffering. It’s the moment your video player has played through all its pre-downloaded content and is now frantically trying to download more.

I like to use a relay race analogy. Imagine your internet connection is the first runner, carrying chunks of video data. It passes the baton to the second runner—your device’s buffer. The buffer then hands it off to the final runner, the video player, which puts the action on your screen.

As long as the internet runner is faster than the video player, the race is smooth. But if your internet connection stumbles, the buffer quickly runs out of batons to pass, and the whole show stops.

Why Does Buffering Still Happen?

With high-speed internet being so common, it’s fair to ask why we still deal with buffering at all. The truth is, streaming is a complex process. It requires a whole chain of systems to work together perfectly, and a weak link anywhere can cause that frustrating pause. The main challenge is getting a massive amount of data from a server—which could be on the other side of the world—to your screen without a single interruption.

It all boils down to a few key factors:

- Download vs. Playback Rate: The golden rule is that your device must download the video data faster than it plays it. If the download rate falls below the playback rate, the buffer starts to drain and eventually empties.

- Network Stability: Raw speed isn’t everything. An internet connection that constantly fluctuates is a major cause of rebuffering, even if its peak speed is high.

- Content Quality: Streaming a 4K video requires a ton more data than a standard-definition one. A connection that works just fine for a 720p stream might struggle to keep up with the demands of ultra-high definition.
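
To make the stability point concrete, here is a minimal sketch that compares two connections with the same average speed. It is a toy model, not how any real player is implemented: the function name, the one-second time step, the 4 Mbps bitrate, and the 2-second starting buffer are all assumptions chosen for illustration.

```python
def stall_seconds(throughput_mbps, bitrate_mbps, startup_buffer_s=2.0):
    """Count the seconds a viewer spends stalled over a per-second throughput trace."""
    buffer_s = startup_buffer_s   # seconds of video already downloaded
    stalled = 0
    for mbps in throughput_mbps:
        buffer_s += mbps / bitrate_mbps   # seconds of video fetched during this second
        if buffer_s >= 1.0:
            buffer_s -= 1.0               # play one second of video
        else:
            stalled += 1                  # not enough buffered: the spinner shows
    return stalled


# Two hypothetical 20-second traces with the same 6 Mbps average.
steady = [6.0] * 20
choppy = ([1.0] * 5 + [11.0] * 5) * 2

print(sum(steady) / len(steady), stall_seconds(steady, bitrate_mbps=4.0))
print(sum(choppy) / len(choppy), stall_seconds(choppy, bitrate_mbps=4.0))
```

In this toy model both traces average 6 Mbps, yet only the choppy one stalls, because its slow stretches drain the small starting buffer before the fast stretches can refill it.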

A buffer isn’t just some technical feature; it’s the very mechanism that makes smooth streaming possible. It takes the chaotic, unpredictable nature of the internet and transforms it into a steady, reliable viewing experience.

Grasping this concept is the first real step toward fixing buffering problems. In the rest of this guide, we’ll dig into the common culprits behind these interruptions and explore the smart technologies, like those in LiveAPI’s streaming platform, that are built to stop them before they start.

Understanding How a Video Buffer Works

At its heart, a streaming video buffer is a small, temporary holding area for video data on your device. Think of it like a safety net. Your video player doesn’t just download and play content second-by-second; that would be a recipe for disaster.

Instead, it downloads a few seconds of video ahead of where you’re currently watching and stores it locally. This gives you a cushion, a reserve of content that keeps the video playing smoothly even if your internet connection hiccups for a moment. Without that buffer, any tiny network flutter would instantly freeze your stream.

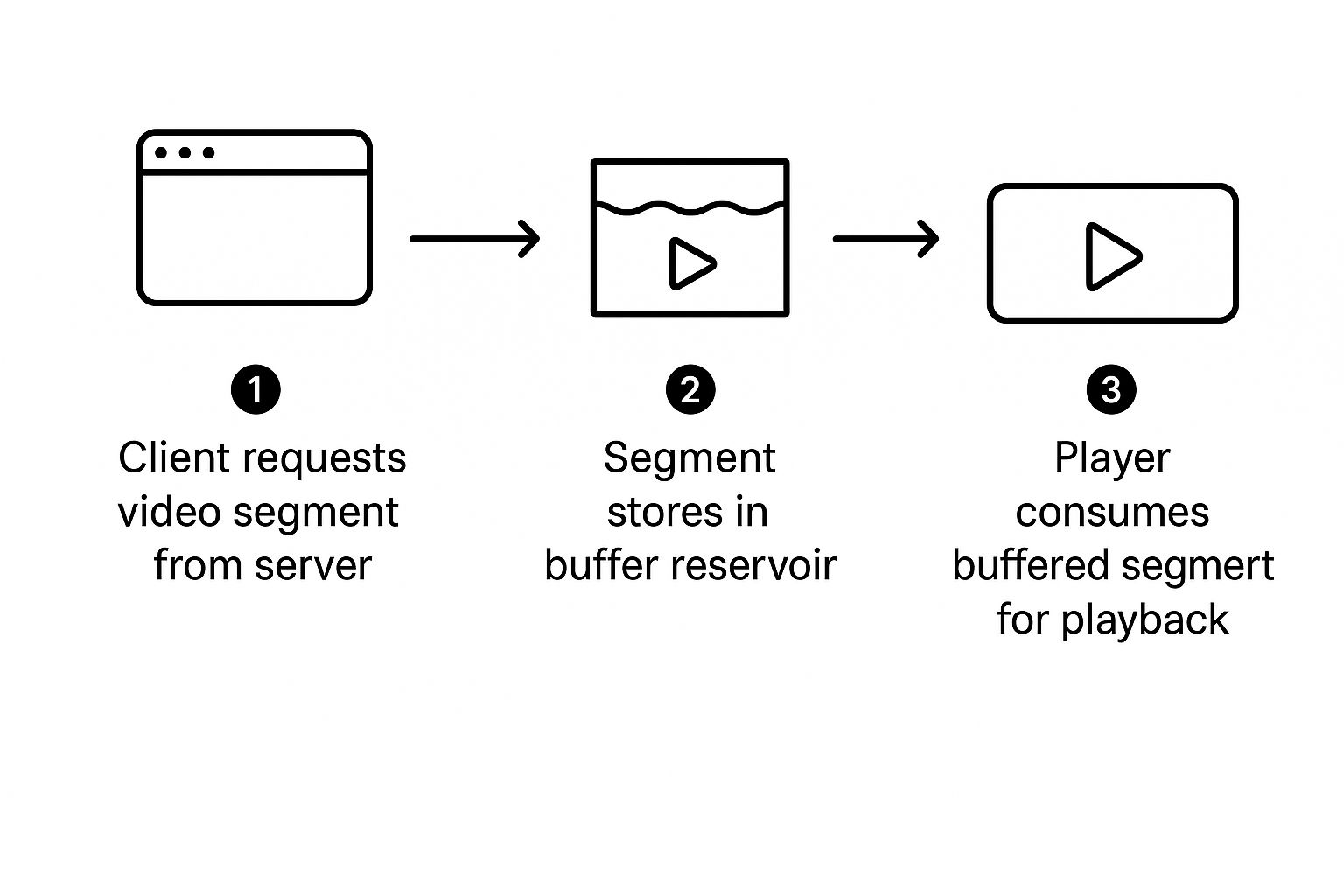

The Journey of a Video Chunk

So, what happens when you hit play? Your device doesn’t try to download the entire two-hour movie at once. That would take forever. Instead, it requests the video in small, manageable pieces called “chunks” or “segments.”

This chunking method is why videos can start playing almost instantly. Your player grabs the first few chunks, lines them up in the buffer, and starts playback while simultaneously working to download the next set of chunks.

It’s a continuous cycle:

- Request: Your player asks the server for the next piece of the video.

- Download: The server sends that chunk to your device.

- Store: The newly arrived chunk goes into the buffer, waiting its turn to be played.

This process repeats over and over, with the player always trying to stay a few steps ahead of what you’re seeing on screen. The infographic below breaks down this simple but powerful three-step loop.

This constant flow—from the server, into the buffer, and onto your screen—is the fundamental engine that drives all modern video streaming.
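
The request, download, and store loop described above can be sketched in a few lines. This is a simplified illustration, assuming a hypothetical `fetch_chunk` stand-in for the network request and a made-up target of three buffered chunks; a real player does this asynchronously and with far more bookkeeping.

```python
from collections import deque


def fetch_chunk(index):
    """Stand-in for a network request; returns a hypothetical chunk record."""
    return {"index": index, "duration_s": 4}


def run_player(total_chunks, target_buffered=3):
    """Play every chunk in order while keeping a few chunks buffered ahead."""
    buffer = deque()       # chunks downloaded but not yet played
    next_to_fetch = 0
    played = []
    while len(played) < total_chunks:
        # Request, download, store: top the buffer up to the target.
        while next_to_fetch < total_chunks and len(buffer) < target_buffered:
            buffer.append(fetch_chunk(next_to_fetch))
            next_to_fetch += 1
        # Play: hand the oldest buffered chunk to the screen.
        chunk = buffer.popleft()
        played.append(chunk["index"])
    return played


print(run_player(6))
```

The queue is first in, first out, so chunks always play in the order they were requested, and the player refills the buffer before each playback step.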

The Balance Between Download and Playback Speed

For a smooth, uninterrupted experience, one golden rule must be followed: your download speed has to be faster than the video’s bitrate, which is the rate at which playback consumes data. If you’re watching a stream encoded at 6 Mbps (megabits per second), your internet connection needs to deliver data faster than 6 Mbps to keep that buffer filled up.

The moment your download speed dips below the playback rate, trouble starts. The player begins using up the buffered video faster than it can be replaced. Once that reserve is completely drained, the video stops dead. That’s rebuffering—the dreaded spinning wheel that appears while your player frantically tries to download the next chunk to get things moving again.
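
A little arithmetic shows how quickly that reserve disappears. The sketch below assumes constant rates, which real networks never offer, and the function name is made up for this example. Each second of playback consumes one second of video, while the download adds back only `download_mbps / bitrate_mbps` seconds.

```python
import math


def seconds_until_stall(buffered_s, download_mbps, bitrate_mbps):
    """Estimate how long playback survives when the download rate is constant."""
    fill_rate = download_mbps / bitrate_mbps   # seconds of video gained per second
    if fill_rate >= 1.0:
        return math.inf                        # buffer holds steady or grows
    return buffered_s / (1.0 - fill_rate)      # net drain is (1 - fill_rate) per second


# A 6 Mbps stream with 10 seconds buffered, on a connection that dropped to 3 Mbps.
print(seconds_until_stall(buffered_s=10, download_mbps=3, bitrate_mbps=6))
```

With half the required bandwidth, 10 seconds of buffered video buys about 20 seconds of playback before the stall.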

The size of the streaming video buffer is a delicate balancing act. A larger buffer provides more protection against network issues but results in a longer initial startup time for the video. A smaller buffer starts the video faster but is less forgiving of unstable connections.
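
The startup side of that trade-off is easy to estimate. This sketch assumes the player waits until the whole initial buffer is filled before it starts, and it ignores request latency and connection setup; the function name and the numbers are illustrative only.

```python
def startup_delay_s(initial_buffer_s, bitrate_mbps, download_mbps):
    """Rough wait before playback if the initial buffer must be filled first."""
    megabits_needed = initial_buffer_s * bitrate_mbps
    return megabits_needed / download_mbps


# A 6 Mbps stream on a 12 Mbps connection.
for buffer_s in (2, 10, 30):
    print(buffer_s, "s buffer ->", startup_delay_s(buffer_s, 6, 12), "s wait")
```

Under these assumptions, the wait grows in direct proportion to the buffer you ask for, which is why players tend to start with a small buffer and build it up during playback.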

Modern streaming platforms, including those built with developer tools like LiveAPI, handle this balancing act for you. They use smart technologies to adjust the stream in real-time, working behind the scenes to make sure the buffer never runs dry. Grasping this core concept is the first step to understanding why streams fail and how to build systems that deliver a flawless viewing experience.

The Common Culprits Behind Rebuffering Issues

When your video grinds to a halt, it’s natural to point the finger at your internet provider. And sometimes, that’s fair. But the reality is that the problem could be hiding anywhere along the incredibly complex path the video takes from the server to your screen. A streaming video buffer that runs dry is just the symptom, not the actual disease.

The root cause usually falls into one of three main buckets. By understanding these, you can start to diagnose why that dreaded spinning wheel pops up and, more importantly, figure out how to make it go away. Let’s dig into the usual suspects.

Client-Side Problems: Your Device and Connection

More often than not, the first place to look for trouble is right in your own home. These are what we call “client-side” issues because they happen on your end of the streaming equation—the viewer’s side.

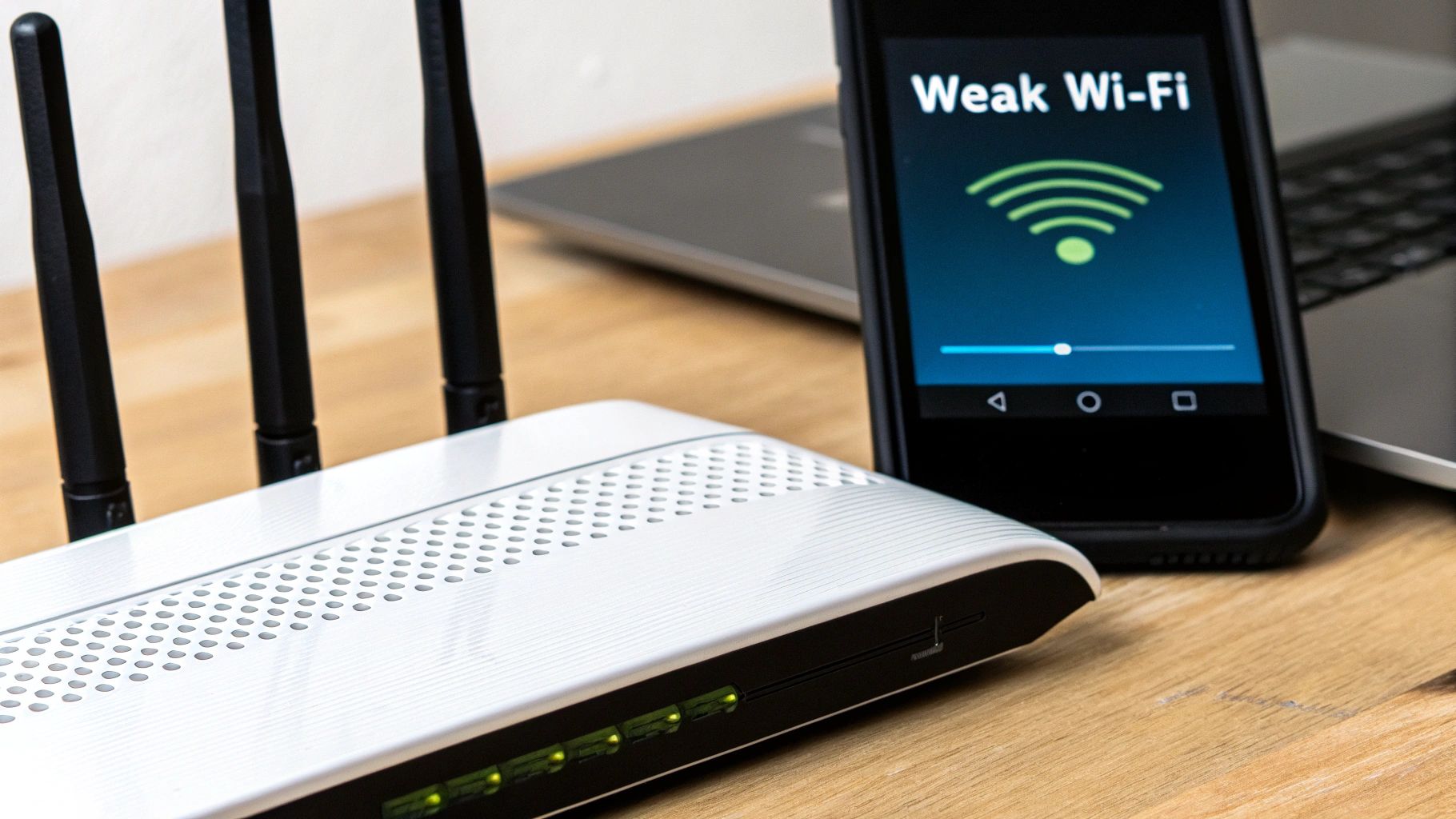

A classic example is a weak or spotty Wi-Fi signal. You might be paying for a lightning-fast internet plan, but if your laptop is on the other side of the house from the router, that connection becomes unreliable. Data packets arrive slowly or get jumbled, effectively starving the video buffer.

It’s also possible that your device is the bottleneck. Streaming high-resolution video is hard work for a processor. If an older phone or an underpowered smart TV can’t decode the incoming video data fast enough, it will struggle to play it back smoothly. You’ll see pauses and stutters even with a rock-solid internet connection.

A few common client-side culprits include:

- Outdated hardware: Older devices may simply lack the processing horsepower for modern, high-bitrate streams.

- Wi-Fi congestion: In a busy household, multiple phones, tablets, and computers all competing for bandwidth can slow things down for everyone.

- Background processes: That big software update downloading in the background? It’s eating up bandwidth and CPU cycles, leaving less for your video.

Network Congestion: The Internet Traffic Jam

Once the video data leaves the comfort of your home network, it has to navigate the wild, unpredictable public internet. This is where network congestion becomes a huge factor. The best way to think about it is like a massive highway system. During peak hours—say, a Friday night when everyone is streaming the latest blockbuster—those digital highways can get completely gridlocked.

This “traffic jam” doesn’t mean your personal internet connection is slow. It just means the specific route the video packets are taking from the server to you is clogged. Data gets delayed or dropped entirely, forcing a re-send and bringing your stream to a screeching halt.

Rebuffering from network congestion often feels random. A stream can be flawless one minute and a buffering mess the next, simply because a major network path between you and the server suddenly got overloaded.

This is one of the biggest challenges in video delivery. Your home network might be a clear country road, but it eventually merges onto a superhighway that can get jammed without any warning.

Server-Side Limitations: The Bottleneck at the Source

Finally, the problem might be right at the source. A streaming provider’s infrastructure has to be powerful enough to serve video to thousands, or even millions, of simultaneous viewers.

If the origin server—the computer where the original video file is stored—gets slammed with too many requests, it just can’t send data out fast enough. This creates a bottleneck before the video even starts its journey. A well-designed Content Delivery Network (CDN) is built to prevent this, but a poorly configured one can cause its own set of headaches.

Another critical server-side issue is improper video preparation. The files need to be encoded and transcoded into multiple quality levels to adapt to different viewer conditions. If these versions aren’t optimized correctly, the files might be too large for a slow connection or incompatible with certain devices. To see how this all works under the hood, check out our guide on what is video transcoding. It’s a crucial step for creating a smooth, adaptive stream.

In the end, a stable, buffer-free stream depends on every single link in the chain working perfectly. A weak link anywhere—from the server to your screen—leads to the same frustrating result.

To help pinpoint the problem, here’s a quick guide to troubleshooting common issues from both the viewer’s and the developer’s perspective.

Troubleshooting Common Rebuffering Issues

| Issue Category | Common Cause | Symptom | Potential Solution |
|---|---|---|---|
| Client-Side | Weak Wi-Fi Signal | Frequent, short buffering cycles; quality drops often. | Viewer: Move closer to the router or use a wired connection. Developer: Offer lower bitrate options in your ABR ladder. |
| Client-Side | Old/Underpowered Device | Stuttering or freezing video, even with a strong connection. | Viewer: Close background apps or try a different device. Developer: Ensure video codecs are widely supported. |
| Network | Internet Congestion | Buffering occurs at specific times (e.g., evenings) and is inconsistent. | Viewer: Try streaming during off-peak hours. Developer: Use a multi-CDN strategy to route traffic around congested areas. |
| Server-Side | Overloaded Origin Server | All users experience buffering at the same time, especially during popular live events. | Viewer: Not much you can do here. Developer: Scale server infrastructure and optimize CDN caching rules. |
| Server-Side | Poorly Optimized Video | Video takes a long time to start or buffers frequently on mobile networks. | Viewer: Switch to a lower quality setting if available. Developer: Review transcoding settings to ensure bitrates are appropriate for target networks. |

Diagnosing the exact cause of rebuffering can be tricky, but by systematically checking each part of the delivery chain, you can isolate the bottleneck and take steps to fix it.

How Adaptive Bitrate Streaming Fights Buffering

Instead of fighting the internet’s unpredictable nature, modern streaming services learned to work with it. The main tool in the fight against that dreaded spinning wheel is a technology called Adaptive Bitrate Streaming (ABR). It’s a beautifully simple idea that completely changed how we watch video online.

Think about driving a car that’s stuck in one gear. It would be awful in city traffic and terrible on the highway. ABR is like a car’s automatic transmission; it intelligently shifts gears to match the “road conditions” of your internet connection at that exact moment.

This technology gets rid of the old, one-size-fits-all approach of sending a single, high-quality video file to everyone. That method was a perfect recipe for frustrating buffering, since any tiny dip in network speed would bring everything to a halt.

The ABR Workflow Explained

The magic behind ABR happens long before you press play. When a video is first prepared for streaming, the original high-resolution file is encoded into several different versions, often called “renditions.” Each of these renditions has a different quality level and, crucially, a different file size (or bitrate).

This process creates what’s known as a “bitrate ladder,” which might include a range of options:

- A stunning 4K version for someone with a blazing-fast fiber connection.

- A solid 1080p version for viewers on standard broadband.

- A dependable 720p version for those on less stable Wi-Fi.

- A lightweight 480p or 360p version for watching on a phone over a cellular network.

When you start streaming, your video player gets to work. It quickly sizes up your network conditions—looking at speed, latency, and stability—and picks the highest-quality rendition it can download reliably without forcing a pause.

The core idea behind ABR is simple: continuous playback is more important than perfect quality. The system would rather seamlessly drop to a lower resolution for a few seconds than make you stare at a loading icon.

This constant, dynamic adjustment is the secret to stopping most buffering before it starts. Your player keeps an eye on your connection, and if it senses things are slowing down, it can instantly switch to a smaller, less demanding video chunk for the next segment. You might see a brief dip in picture clarity, but the video keeps playing.
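
Here is a minimal sketch of that selection step. It shows one simple throughput-based heuristic, not the algorithm of any particular player: the ladder bitrates, the `pick_rendition` name, and the 0.8 safety margin are all assumptions made for illustration.

```python
# Hypothetical bitrate ladder: (rendition name, bitrate in kbps).
LADDER = [
    ("2160p", 16000),
    ("1080p", 5000),
    ("720p", 2800),
    ("480p", 1200),
    ("360p", 700),
]


def pick_rendition(measured_kbps, ladder=LADDER, safety=0.8):
    """Pick the highest rendition that fits within a fraction of measured throughput."""
    budget = measured_kbps * safety   # leave headroom for fluctuations
    for name, kbps in sorted(ladder, key=lambda r: r[1], reverse=True):
        if kbps <= budget:
            return name
    # Nothing fits: fall back to the lowest rendition rather than stop playing.
    return min(ladder, key=lambda r: r[1])[0]


for throughput in (25000, 8000, 3000, 500):
    print(throughput, "kbps ->", pick_rendition(throughput))
```

The player would rerun this choice before each new segment, so a drop in measured throughput shows up as a lower rendition on the next chunk instead of a stall. Production players typically also factor in how full the buffer is.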

Why ABR Is the Industry Standard

The impact of ABR is impossible to miss. Recent data shows that in the first half of 2024, the buffer ratio for on-demand video dropped by an impressive 54% compared to the same period in 2023. This massive improvement shows just how well these adaptive strategies create a better experience for viewers—a critical factor in a streaming market projected to fly past $1.9 trillion by 2030.
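
If you want to track this metric for your own streams, one common way to define buffer ratio is the share of a viewing session spent stalled. The sketch below uses that definition as an assumption, since the figure quoted above does not spell out its method, and the session numbers are hypothetical.

```python
def buffer_ratio(stall_s, playing_s):
    """Fraction of a viewing session spent waiting on the buffer."""
    total = stall_s + playing_s
    if total == 0:
        return 0.0
    return stall_s / total


def percent_drop(before, after):
    """Relative improvement between two buffer ratios, as a percentage."""
    return (before - after) / before * 100


# Hypothetical session: 3 seconds of stalls during 597 seconds of playback.
print(buffer_ratio(stall_s=3, playing_s=597))
```

A year-over-year comparison like the one above would then be a `percent_drop` between the two periods' ratios.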

The benefits of using an ABR strategy are clear, creating a win-win for both the people watching and the companies providing the stream. For a more detailed breakdown, you can read our complete guide on adaptive bitrate streaming.

- For Viewers: You get the best possible quality your connection can support at any given moment. This means fewer interruptions and a much smoother, more enjoyable viewing experience.

- For Providers: It lets them reach a huge audience with all kinds of connection speeds using just one set of video files. This cuts down on technical headaches and, more importantly, keeps viewers happy and coming back for more.

By automatically adjusting to real-world internet conditions, ABR makes sure the video buffer stays comfortably full. It turns what could be a jarring, stop-and-start session into the seamless experience we’ve all come to expect from modern streaming.

Optimizing Delivery with a Content Delivery Network

While Adaptive Bitrate (ABR) streaming is a smart way to handle shaky internet connections, it doesn’t solve a much bigger, more physical problem: distance. Every single byte of video data has to travel from a server to a viewer’s screen. The farther it has to go, the higher the odds of delays that can quickly empty that precious streaming video buffer.

This is exactly where a Content Delivery Network (CDN) steps in. Think of a CDN as a global network of digital warehouses for your video files. Instead of one single “origin” server in, say, Virginia, trying to serve a video to the entire world, a CDN copies that video and places it in hundreds of smaller servers across the globe.

So, when a viewer in Tokyo hits play, their request doesn’t have to cross the Pacific Ocean to Virginia. Instead, it gets routed to the closest CDN server—maybe one just down the road in Tokyo. This simple change drastically reduces the data’s travel time, a factor we call latency.

The Core Function of a CDN

At its heart, a CDN’s job is to close the gap between your content and your audience. By caching (which is just a fancy word for storing a temporary copy of) your video files on servers physically closer to your viewers, it creates a much faster and more dependable delivery route.

This geographic advantage has a direct impact on buffering. With lower latency, the first chunks of video arrive almost instantly, so playback starts sooner. Just as important, all the following chunks get there faster and more consistently, making it far easier for the player to fill the buffer and keep it full.
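
To get a feel for why distance matters, the sketch below estimates the minimum round-trip time over fiber from great-circle distance and picks the closest server. It is a back-of-the-envelope model: real CDNs usually route requests with DNS or anycast and weigh server load too, and the edge names and coordinates here are hypothetical.

```python
import math

FIBER_KM_PER_S = 200_000  # light in fiber travels at roughly two thirds of its vacuum speed


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two points on Earth, in kilometers."""
    earth_radius_km = 6371.0
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def min_round_trip_ms(km):
    """Lower bound on round-trip time over fiber, ignoring routing and queuing."""
    return 2 * km / FIBER_KM_PER_S * 1000


# Hypothetical edge locations as (latitude, longitude).
EDGES = {"tokyo": (35.68, 139.69), "virginia": (39.04, -77.49)}


def nearest_edge(viewer, edges=EDGES):
    """Return the name of the edge server closest to the viewer."""
    return min(edges, key=lambda name: great_circle_km(*viewer, *edges[name]))


viewer_in_osaka = (34.69, 135.50)
for name, location in EDGES.items():
    km = great_circle_km(*viewer_in_osaka, *location)
    print(name, round(km), "km,", round(min_round_trip_ms(km), 1), "ms minimum")
print("nearest:", nearest_edge(viewer_in_osaka))
```

Every chunk request pays that round trip at least once, so cutting thousands of kilometers out of the path helps on every single segment.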

For any platform with a global or even regional audience, a CDN isn’t a luxury; it’s essential infrastructure. It’s what separates a frustrating, lag-filled stream from a smooth, professional broadcast. Finding the right setup is key, as we explore in our guide to choosing the best CDN for video streaming.

A CDN effectively decentralizes video delivery. Instead of creating a single potential point of failure with one origin server, it distributes the load across a vast, resilient network, ensuring high availability and speed for every viewer, no matter where they are.

This distributed model doesn’t just make things faster; it makes them more reliable. If one server in the network crashes or gets overloaded, traffic is instantly rerouted to the next-best option, often without the viewer ever knowing there was a problem.
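
That rerouting can be pictured as walking down a list of candidates until one responds. This is a simplified sketch with made-up server names and a caller-supplied health check; an actual CDN makes this decision inside its own routing layer.

```python
def choose_edge(candidates, is_healthy, fallback="origin"):
    """Return the first healthy server from a list ordered nearest first."""
    for name in candidates:
        if is_healthy(name):
            return name
    return fallback   # every edge is down: go back to the origin server


# Hypothetical scenario: the closest edge is overloaded.
down = {"tokyo"}
print(choose_edge(["tokyo", "osaka", "seoul"], lambda name: name not in down))
```

The viewer's request still gets served, just from the next-closest location.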

How a CDN and ABR Work Together

A CDN and ABR streaming aren’t competing technologies. They’re two sides of the same coin, working in tandem to keep buffering to a minimum. Together they create a powerful one-two punch that tackles both the “distance” and “speed” parts of the delivery puzzle.

Here’s how they team up:

- Request: A viewer presses play. The CDN instantly sends their request to the nearest server.

- Assessment: The viewer’s video player talks to that local CDN server, figuring out the quality of the connection between them.

- Delivery: Based on that assessment, the player asks for the right quality version from the ABR ladder, which is already stored and ready to go on that nearby server.

This synergy means the player isn’t just getting the right-sized video file for its connection (thanks to ABR), but it’s also getting it from the fastest possible location (thanks to the CDN).

These kinds of technical improvements are what’s fueling the explosive growth of the video streaming market. While North America is the current leader, the industry is expanding at a breakneck pace worldwide. Some projections even suggest the market could be worth nearly $3 trillion by the mid-2030s. As platforms roll out hybrid models mixing subscriptions and ads, keeping viewers engaged with buffer-free streams will be more critical than ever.

Ultimately, this powerful combination is the modern standard for delivering a superior streaming experience at scale.

Solving Buffering Challenges for Live Events

Live streaming is the ultimate high-wire act in video delivery. Think about it: when you watch a movie on-demand, your device can quietly download and store several minutes of video in its streaming video buffer. This gives you a huge safety net. But with live events, that safety net is tiny.

The whole point of live is to minimize latency—the gap between something happening in the real world and you seeing it on screen. To keep that delay as short as possible, the buffer might only hold a few seconds of video. This means any hiccup in your internet connection can drain the buffer in a flash, leading to that dreaded spinning wheel that yanks you right out of the moment.
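
The difference is easy to put in numbers. The sketch below assumes the connection drops out completely for a few seconds and that the buffer was full when the outage began; the buffer sizes are illustrative, not measurements from any real service.

```python
def stall_during_outage(buffered_s, outage_s):
    """Seconds of frozen video if the connection drops out entirely."""
    return max(0.0, outage_s - buffered_s)


outage_s = 5.0
# Hypothetical buffer depths for each kind of stream.
for label, buffered_s in (("on-demand", 120.0), ("live", 3.0)):
    print(label, "stalls for", stall_during_outage(buffered_s, outage_s), "seconds")
```

The on-demand viewer never notices the outage, while the live viewer sees a freeze. Making the live buffer deeper would fix that, but every extra second of buffer is another second behind the real event.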

For massive events like the Super Bowl or a major product launch, where millions of people are watching at the exact same time, this becomes an incredible technical challenge.

The Live Streaming Triple Threat

To pull off a broadcast-quality live experience, you need a system where everything is perfectly in sync. It’s not about having one great component; it’s about having a seamless workflow where every piece protects that fragile video buffer.

Here are the three must-haves for success:

- Efficient Transcoding: The raw video feed has to be encoded into multiple quality levels for ABR, and it has to happen instantly. Any delay here adds to the overall latency.

- Intelligent ABR: The video player needs lightning-fast reflexes. It must detect network slowdowns quickly, ideally within a single segment download, and switch to a lower-quality stream before the buffer runs dry.

- A Robust CDN: The Content Delivery Network has to be a fortress, capable of handling massive, sudden traffic spikes without flinching. It needs to deliver video from a nearby server with almost zero delay.

In a live stream, there’s no room for error. A single bottleneck in transcoding, ABR logic, or CDN performance can trigger a cascade of buffering issues for thousands of viewers at once, ruining the shared experience.

This tightly integrated approach is the only way to meet the sky-high expectations of a live audience. And the stakes are only getting higher. The live streaming space has seen explosive growth, fueled by better internet and the fact that mobile devices now make up about 60% of all live stream views.

The market is set to rocket from $65.97 billion in 2024 to $338.17 billion by 2033. In this competitive landscape, a smooth, buffer-free stream is the key to grabbing—and keeping—your audience. You can learn more about the expanding live streaming market and its projections.

When a live stream works perfectly, it feels effortless to the viewer. But behind the scenes, it’s a high-stakes balancing act. This is exactly what platforms like LiveAPI are built for—managing this complexity by combining fast transcoding, smart ABR, and a powerful CDN to make sure the on-screen action never has to stop, no matter how big the crowd gets.

Frequently Asked Questions About Video Buffering

Even when you understand the mechanics, a few common questions always seem to come up when you’re in the trenches dealing with video streaming. Let’s tackle some of the most frequent ones to help you connect the dots and get to the root of buffering issues faster.

Can I Increase My Video Buffer Size to Stop Buffering?

Technically, you might find some workarounds to force a larger buffer on your device, but it’s not a real-world fix for viewers. Think of it this way: a bigger buffer tank takes longer to fill. That means you’re just trading mid-stream interruptions for a painfully long wait before the video even starts.

Modern streaming has much more elegant solutions. Adaptive Bitrate (ABR) streaming, for instance, is a game-changer. It intelligently adjusts the video quality on the fly to match your connection speed, making sure the buffer never drains in the first place. This is about preventing the problem, not just patching it with a bigger buffer.

The goal isn’t just a bigger buffer; it’s a smarter buffer. Effective streaming platforms ensure the buffer is constantly and efficiently replenished, making its absolute size less of a concern.

Is Buffering My Internet Provider’s Fault or the Streaming Service’s?

Honestly, it could be either—or even a bit of both. The bottleneck could be right in your home network, somewhere out on the public internet, or an issue with the streaming service’s own setup.

A good first step is always to run a quick internet speed test. If your speeds are way below what you’re paying for, your provider or your home network is a likely suspect. But if your connection is zipping along just fine, the problem more likely lies elsewhere: a congested route on the public internet, or the streaming service itself, maybe with their servers or the Content Delivery Network (CDN) they rely on to get the video to you.

Why Does Live Streaming Buffer More Than On-Demand Video?

Live streaming is a high-wire act. The entire goal is to slash the time between something happening in the real world and you seeing it on your screen—what we call latency. To keep that delay down to a few seconds, a live stream has to operate with a tiny streaming video buffer, often holding just a few seconds of video.

On-demand video has the luxury of pre-loading minutes of content, building a huge safety cushion against network hiccups. A live stream’s much smaller buffer means it’s incredibly sensitive to any dip in your connection. Even a brief network flutter can be enough to drain that small reserve and trigger a frustrating rebuffering screen.

Ready to build a streaming application without the buffering headaches? LiveAPI provides developers with robust tools for transcoding, ABR, and global CDN delivery, ensuring a flawless viewing experience for your audience. Start building with LiveAPI today.
